mirror of https://github.com/cloudreve/cloudreve.git, synced 2026-03-03 16:57:02 +00:00

Compare commits

234 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 5b823305d5 | | |
| | edf16a9ed8 | | |
| | 5d915f11ff | | |
| | d9baa74c81 | | |
| | 3180c72b53 | | |
| | 95865add54 | | |
| | 9a59c8348e | | |
| | 846366d223 | | |
| | 5d45691e43 | | |
| | 2a59407916 | | |
| | a8a625e967 | | |
| | 153a00ecd5 | | |
| | 1e3b851e19 | | |
| | ec9fdd33bc | | |
| | 6322a9e951 | | |
| | 57239e81af | | |
| | 9dcc82ead8 | | |
| | b913b4683f | | |
| | 1f580f0d8a | | |
| | 87d48ac4a7 | | |
| | 5d9cfaa973 | | |
| | 2241a9e2c8 | | |
| | 1c5eefdc6a | | |
| | c99a4ece90 | | |
| | 43d77d2319 | | |
| | e4e6beb52d | | |
| | 47218607ff | | |
| | 5b214beadc | | |
| | 2ecc7f4f59 | | |
| | 2725bd47b5 | | |
| | 864332f2e5 | | |
| | a84c5d8e97 | | |
| | a908ec462f | | |
| | bc6845bd74 | | |
| | 7039fa801d | | |
| | 6f8aecd35a | | |
| | 722abb81c5 | | |
| | e8f965e980 | | |
| | f01ed64bdb | | |
| | 736414fa10 | | |
| | 5924e406ab | | |
| | 87b1020c4a | | |
| | 32632db36f | | |
| | c01b748dfc | | |
| | 05c68b4062 | | |
| | a08c796e3f | | |
| | fec4dec3ac | | |
| | 67c6f937c9 | | |
| | 6ad72e07f4 | | |
| | 994ef7af81 | | |
| | b507c1b893 | | |
| | deecc5c20b | | |
| | 6085f2090f | | |
| | 670b79eef3 | | |
| | 4785be81c2 | | |
| | f27969d74f | | |
| | e3580d9351 | | |
| | 16b02b1fb3 | | |
| | 6bd30a8af7 | | |
| | 21cdafb2af | | |
| | e29237d593 | | |
| | 46897e2880 | | |
| | 213eaa54dd | | |
| | e7d6fb25e4 | | |
| | e3e08a9b75 | | |
| | 78f7ec8b08 | | |
| | 3d41e00384 | | |
| | 5e5dca40c4 | | |
| | 668b542c59 | | |
| | 440ab775b8 | | |
| | 678593f30d | | |
| | 58ceae9708 | | |
| | 3b8110b648 | | |
| | f0c5b08428 | | |
| | 9434c2f29b | | |
| | 7d97237593 | | |
| | a581851f84 | | |
| | fe7cf5d0d8 | | |
| | cec2b55e1e | | |
| | af43746ba2 | | |
| | 9f1cb52cfb | | |
| | 4acf9401b8 | | |
| | c3ed4f5839 | | |
| | 9b40e0146f | | |
| | a16b491f65 | | |
| | a095117061 | | |
| | acc660f112 | | |
| | a677e23394 | | |
| | 13e774f27d | | |
| | 91717b7c49 | | |
| | a1ce16bd5e | | |
| | 872b08e5da | | |
| | f73583b370 | | |
| | c0132a10cb | | |
| | 927c3bff00 | | |
| | bb9b42eb10 | | |
| | 5f18d277c8 | | |
| | b0057fe92f | | |
| | bb3db2e326 | | |
| | 8deeadb1e5 | | |
| | 8688069fac | | |
| | 4c08644b05 | | |
| | 4c976b8627 | | |
| | b0375f5a24 | | |
| | 48e9719336 | | |
| | 7654ce889c | | |
| | 80b25e88ee | | |
| | e31a6cbcb3 | | |
| | 51d9e06f21 | | |
| | 36be9b7a19 | | |
| | c8c2a60adb | | |
| | 60bf0e02b3 | | |
| | 488f32512d | | |
| | 1cdccf5fc9 | | |
| | 15762cb393 | | |
| | e96b595622 | | |
| | d19fc0e75c | | |
| | 195d68c535 | | |
| | 000124f6c7 | | |
| | ca57ca1ba0 | | |
| | 3cda4d1ef7 | | |
| | b13490357b | | |
| | 617d3a4262 | | |
| | 75a03aa708 | | |
| | fe2ccb4d4e | | |
| | aada3aab02 | | |
| | a0aefef691 | | |
| | 17fc598fb3 | | |
| | 19a65b065c | | |
| | e0b2b4649e | | |
| | 642c32c6cc | | |
| | 6106b57bc7 | | |
| | f38f32f9f5 | | |
| | d382bd8f8d | | |
| | 02abeaed2e | | |
| | 6c9a72af14 | | |
| | 4562042b8d | | |
| | dc611bcb0d | | |
| | 2500ebc6a4 | | |
| | 3db522609e | | |
| | d1bbfd4bc4 | | |
| | b11188fa50 | | |
| | 1bd62e8feb | | |
| | fec549f5ec | | |
| | 8fe2889772 | | |
| | bdc0aafab0 | | |
| | 3de33aeb10 | | |
| | 9f9796f2f3 | | |
| | 9a216cd09e | | |
| | 41eb010698 | | |
| | 9d28fde00c | | |
| | 40644f5234 | | |
| | d6d615e689 | | |
| | 95d2b5804e | | |
| | a517f41ab1 | | |
| | e57e11a30e | | |
| | 5f1b3a2bed | | |
| | b5136fc5e4 | | |
| | 633ea479d7 | | |
| | 8d6d188c3f | | |
| | 6561e3075f | | |
| | e750cbfb77 | | |
| | 3ab86e9b1d | | |
| | e2dbb0404a | | |
| | 2a7b46437f | | |
| | fe309b234c | | |
| | 522fcca6af | | |
| | c13b7365b0 | | |
| | 51fa9f66a5 | | |
| | 65095855c1 | | |
| | ec53769e33 | | |
| | 9a96a88243 | | |
| | e0b193427c | | |
| | 1fa70dc699 | | |
| | db7b54c5d7 | | |
| | c6ee3e5dcd | | |
| | 9f5ebe11b6 | | |
| | 1a3c3311e6 | | |
| | acffd984c1 | | |
| | 7ddb611d6c | | |
| | 7bace40a4d | | |
| | 2fac086127 | | |
| | a10a008ed7 | | |
| | 5d72faf688 | | |
| | 0a28bf1689 | | |
| | bdaf091aca | | |
| | d60c3e6bf4 | | |
| | 1e2cfe0061 | | |
| | 71a624c10e | | |
| | 1b8beb3390 | | |
| | 762811d50f | | |
| | edd50147e7 | | |
| | 006bcabcdb | | |
| | 10e3854082 | | |
| | fbf1d1d42c | | |
| | c5467f228a | | |
| | 2a6a43d242 | | |
| | 6e82ce2a9d | | |
| | a0b4c97db0 | | |
| | 2333ed3501 | | |
| | 67d3b25c87 | | |
| | ca47f79ecb | | |
| | 77ae381474 | | |
| | c6eef43590 | | |
| | d8fc81d0eb | | |
| | d195002bf7 | | |
| | d6496ee9a0 | | |
| | 55a3669a9e | | |
| | 969e35192a | | |
| | 224ac28ffe | | |
| | cc69178310 | | |
| | 9226d0c8ec | | |
| | 7b5e0e8581 | | |
| | d60e400f83 | | |
| | 21d158db07 | | |
| | da4e44b77a | | |
| | 3373b9dc02 | | |
| | 12e3f10ad7 | | |
| | 23d009d611 | | |
| | 3edb00a648 | | |
| | 88409cc1f0 | | |
| | cd6eee0b60 | | |
| | 3ffce1e356 | | |
| | ce832bf13d | | |
| | 5642dd3b66 | | |
| | a1747073df | | |
| | ad6c6bcd93 | | |
| | f4a04ce3c3 | | |
| | 247e31079c | | |
| | a26893aabc | | |
| | ce759c02b1 | | |
| | 9f6f9adc89 | | |
| | 91025b9f24 | | |
| | a9bee3e638 | | |

.build/aria2.supervisor.conf (7 changed lines, Normal file)

    @@ -0,0 +1,7 @@
    [supervisord]
    nodaemon=false

    [program:background_process]
    command=aria2c --enable-rpc --save-session /cloudreve/data
    autostart=true
    autorestart=true

.build/build-assets.sh (15 changed lines, Executable file)

    @@ -0,0 +1,15 @@
    #!/bin/bash
    set -e
    export NODE_OPTIONS="--max-old-space-size=8192"

    # This script is used to build the assets for the application.
    cd assets
    rm -rf build
    yarn install --network-timeout 1000000
    yarn version --new-version $1 --no-git-tag-version
    yarn run build

    # Copy the build files to the application directory
    cd ../
    zip -r - assets/build >assets.zip
    mv assets.zip application/statics
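The script above forwards its first argument unquoted to `yarn version --new-version`, so an empty or malformed version only shows up as a yarn error. The following is a minimal sketch of a guard, assuming the argument is a dotted version string such as `4.1.0` or `4.1.1-next` (both values are illustrative). `require_version` is a hypothetical helper and is not part of the repository.

```shell
#!/bin/sh
# Hypothetical guard (not in the repository): check the version argument
# before it is handed to `yarn version --new-version`.
require_version() {
  case "${1:-}" in
    # Reject empty input and anything outside digits, letters, '.', '+', '-'.
    '' | *[!0-9A-Za-z.+-]*)
      echo "usage: build-assets.sh <version>" >&2
      return 1
      ;;
    # Loose check: starts with a digit and has two more dot-separated,
    # digit-led parts (for example 4.1.0 or 4.1.1-next).
    [0-9]*.[0-9]*.[0-9]*)
      printf '%s\n' "$1"
      ;;
    *)
      echo "not a dotted version: $1" >&2
      return 1
      ;;
  esac
}
```

Under `set -e`, an assignment such as `version="$(require_version "$1")"` placed before the `yarn version` line would stop the script early on bad input.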

.build/entrypoint.sh (2 changed lines, Executable file)

    @@ -0,0 +1,2 @@
    supervisord -c ./aria2.supervisor.conf
    ./cloudreve

.github/DISCUSSION_TEMPLATE/general.yml (19 changed lines, vendored, Normal file)

    @@ -0,0 +1,19 @@
    title: "General Discussion"
    body:
      - type: checkboxes
        attributes:
          label: Self Checks
          description: "To make sure we get to you in time, please check the following :)"
          options:
            - label: I have searched for existing issues [search for existing issues](https://github.com/cloudreve/cloudreve/issues), including closed ones.
              required: true
            - label: I confirm that I am using English to submit this report, otherwise it will be closed. / 请使用英语提交,否则会被关闭。
              required: true
            - label: "Please do not modify this template :) and fill in all the required fields."
              required: true
      - type: textarea
        attributes:
          label: Content
          placeholder: Please describe the content you would like to discuss.
        validations:
          required: true

.github/DISCUSSION_TEMPLATE/ideas.yml (35 changed lines, vendored, Normal file)

    @@ -0,0 +1,35 @@
    title: Suggestions for New Features
    body:
      - type: checkboxes
        attributes:
          label: Self Checks
          description: "To make sure we get to you in time, please check the following :)"
          options:
            - label: I have searched for existing issues [search for existing issues](https://github.com/cloudreve/cloudreve/issues), including closed ones.
              required: true
            - label: I confirm that I am using English to submit this report, otherwise it will be closed. / 请使用英语提交,否则会被关闭。
              required: true
            - label: "Please do not modify this template :) and fill in all the required fields."
              required: true
      - type: textarea
        attributes:
          label: 1. Is this request related to a challenge you're experiencing? Tell me about your story.
          placeholder: Please describe the specific scenario or problem you're facing as clearly as possible. For instance "I was trying to use [feature] for [specific task], and [what happened]... It was frustrating because...."
        validations:
          required: true
      - type: textarea
        attributes:
          label: 2. Additional context or comments
          placeholder: (Any other information, comments, documentations, links, or screenshots that would provide more clarity. This is the place to add anything else not covered above.)
        validations:
          required: false
      - type: checkboxes
        attributes:
          label: 3. Can you help us with this feature?
          description: Let us know! This is not a commitment, but a starting point for collaboration.
          options:
            - label: I am interested in contributing to this feature.
              required: false
      - type: markdown
        attributes:
          value: Please limit one request per issue.

.github/DISCUSSION_TEMPLATE/q-a.yml (28 changed lines, vendored, Normal file)

    @@ -0,0 +1,28 @@
    title: "Q&A"
    body:
      - type: checkboxes
        attributes:
          label: Self Checks
          description: "To make sure we get to you in time, please check the following :)"
          options:
            - label: I have searched for existing issues [search for existing issues](https://github.com/cloudreve/cloudreve/issues), including closed ones.
              required: true
            - label: I confirm that I am using English to submit this report, otherwise it will be closed. / 请使用英语提交,否则会被关闭。
              required: true
            - label: "Please do not modify this template :) and fill in all the required fields."
              required: true
      - type: textarea
        attributes:
          label: 1. Is this request related to a challenge you're experiencing? Tell me about your story.
          placeholder: Please describe the specific scenario or problem you're facing as clearly as possible. For instance "I was trying to use [feature] for [specific task], and [what happened]... It was frustrating because...."
        validations:
          required: true
      - type: textarea
        attributes:
          label: 2. Additional context or comments
          placeholder: (Any other information, comments, documentations, links, or screenshots that would provide more clarity. This is the place to add anything else not covered above.)
        validations:
          required: false
      - type: markdown
        attributes:
          value: Please limit one request per issue.

.github/ISSUE_TEMPLATE/bug_report.md (38 changed lines, vendored)

    @@ -1,38 +0,0 @@
    ---
    name: Bug report
    about: Create a report to help us improve
    title: ''
    labels: ''
    assignees: ''

    ---

    **Describe the bug**
    A clear and concise description of what the bug is.

    **To Reproduce**
    Steps to reproduce the behavior:
    1. Go to '...'
    2. Click on '....'
    3. Scroll down to '....'
    4. See error

    **Expected behavior**
    A clear and concise description of what you expected to happen.

    **Screenshots**
    If applicable, add screenshots to help explain your problem.

    **Desktop (please complete the following information):**
    - OS: [e.g. iOS]
    - Browser [e.g. chrome, safari]
    - Version [e.g. 22]

    **Smartphone (please complete the following information):**
    - Device: [e.g. iPhone6]
    - OS: [e.g. iOS8.1]
    - Browser [e.g. stock browser, safari]
    - Version [e.g. 22]

    **Additional context**
    Add any other context about the problem here.

.github/ISSUE_TEMPLATE/bug_report.yml (91 changed lines, vendored, Normal file)

    @@ -0,0 +1,91 @@
    name: "🕷️ Bug report"
    description: Report errors or unexpected behavior
    labels:
      - bug
    body:
      - type: checkboxes
        attributes:
          label: Self Checks
          description: "To make sure we get to you in time, please check the following :)"
          options:
            - label: I have read the [Contributing Guide](https://docs.cloudreve.org/api/contributing) and [Language Policy](https://github.com/cloudreve/cloudreve/discussions/3335).
              required: true
            - label: This is only for bug report, if you would like to ask a question, please head to [Discussions](https://github.com/cloudreve/cloudreve/discussions).
              required: true
            - label: I have searched for existing issues [search for existing issues](https://github.com/cloudreve/cloudreve/issues), including closed ones.
              required: true
            - label: I confirm that I am using English to submit this report, otherwise it will be closed. / 请使用英语提交,否则会被关闭。
              required: true
            - label: "Please do not modify this template :) and fill in all the required fields."
              required: true

      - type: input
        attributes:
          label: Cloudreve version
          description: e.g. 4.14.0
        validations:
          required: true

      - type: dropdown
        attributes:
          label: Pro or Community Edition
          description: What version of Cloudreve are you using?
          multiple: true
          options:
            - Pro
            - Community (Open Source)
        validations:
          required: true

      - type: dropdown
        attributes:
          label: Database type
          description: What database are you using?
          multiple: true
          options:
            - MySQL
            - PostgreSQL
            - SQLite
            - I don't know
        validations:
          required: true

      - type: input
        attributes:
          label: Browser and operating system
          description: What browser and operating system are you using?
          placeholder: E.g. Chrome 123.0.0 on macOS 14.0.0
        validations:
          required: false

      - type: textarea
        attributes:
          label: Steps to reproduce
          description: We highly suggest including screenshots and a bug report log. Please use the right markdown syntax for code blocks.
          placeholder: Having detailed steps helps us reproduce the bug. If you have logs, please use fenced code blocks (triple backticks ```) to format them.
        validations:
          required: true

      - type: textarea
        attributes:
          label: ✔️ Expected Behavior
          description: Describe what you expected to happen.
          placeholder: What were you expecting? Please do not copy and paste the steps to reproduce here.
        validations:
          required: true

      - type: textarea
        attributes:
          label: ❌ Actual Behavior
          description: Describe what actually happened.
          placeholder: What happened instead? Please do not copy and paste the steps to reproduce here.
        validations:
          required: false

      - type: input
        attributes:
          label: Addition context information
          description: Provide any additional context information that might be helpful.
          placeholder: Any additional information that might be helpful.
        validations:
          required: false

.github/ISSUE_TEMPLATE/config.yml (14 changed lines, vendored, Normal file)

    @@ -0,0 +1,14 @@
    blank_issues_enabled: false
    contact_links:
      - name: "\U0001F4F1 iOS App related issues"
        url: "https://github.com/cloudreve/ios-feedback/issues/new"
        about: Report issues related to the official iOS/iPadOS client.
      - name: "\U0001F5A5 Desktop client related issues"
        url: "https://github.com/cloudreve/desktop/issues/new"
        about: Report issues related to the official desktop client.
      - name: "\U0001F4AC Documentation Issues"
        url: "https://github.com/cloudreve/docs/issues/new"
        about: Report issues with the documentation, such as typos, outdated information, or missing content. Please provide the specific section and details of the issue.
      - name: "\U0001F4E7 Discussions"
        url: https://github.com/cloudreve/cloudreve/discussions
        about: General discussions and seek help from the community

.github/ISSUE_TEMPLATE/feature_request.md (20 changed lines, vendored)

    @@ -1,20 +0,0 @@
    ---
    name: Feature request
    about: Suggest an idea for this project
    title: ''
    labels: ''
    assignees: ''

    ---

    **Is your feature request related to a problem? Please describe.**
    A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

    **Describe the solution you'd like**
    A clear and concise description of what you want to happen.

    **Describe alternatives you've considered**
    A clear and concise description of any alternative solutions or features you've considered.

    **Additional context**
    Add any other context or screenshots about the feature request here.

.github/ISSUE_TEMPLATE/feature_request.yml (40 changed lines, vendored, Normal file)

    @@ -0,0 +1,40 @@
    name: "⭐ Feature or enhancement request"
    description: Propose something new.
    labels:
      - enhancement
    body:
      - type: checkboxes
        attributes:
          label: Self Checks
          description: "To make sure we get to you in time, please check the following :)"
          options:
            - label: I have read the [Contributing Guide](https://docs.cloudreve.org/api/contributing) and [Language Policy](https://github.com/cloudreve/cloudreve/discussions/3335).
              required: true
            - label: I have searched for existing issues [search for existing issues](https://github.com/cloudreve/cloudreve/issues), including closed ones.
              required: true
            - label: I confirm that I am using English to submit this report, otherwise it will be closed. / 请使用英语提交,否则会被关闭。
              required: true
            - label: "Please do not modify this template :) and fill in all the required fields."
              required: true
      - type: textarea
        attributes:
          label: 1. Is this request related to a challenge you're experiencing? Tell me about your story.
          placeholder: Please describe the specific scenario or problem you're facing as clearly as possible. For instance "I was trying to use [feature] for [specific task], and [what happened]... It was frustrating because...."
        validations:
          required: true
      - type: textarea
        attributes:
          label: 2. Additional context or comments
          placeholder: (Any other information, comments, documentations, links, or screenshots that would provide more clarity. This is the place to add anything else not covered above.)
        validations:
          required: false
      - type: checkboxes
        attributes:
          label: 3. Can you help us with this feature?
          description: Let us know! This is not a commitment, but a starting point for collaboration.
          options:
            - label: I am interested in contributing to this feature.
              required: false
      - type: markdown
        attributes:
          value: Please limit one request per issue.

.github/workflows/build.yml (31 changed lines, vendored)

    @@ -1,31 +0,0 @@
    name: Build

    on: workflow_dispatch

    jobs:
      build:
        name: Build
        runs-on: ubuntu-latest
        steps:
          - name: Set up Go 1.20
            uses: actions/setup-go@v2
            with:
              go-version: "1.20"
            id: go

          - name: Check out code into the Go module directory
            uses: actions/checkout@v2
            with:
              clean: false
              submodules: "recursive"
          - run: |
              git fetch --prune --unshallow --tags

          - name: Build and Release
            uses: goreleaser/goreleaser-action@v4
            with:
              distribution: goreleaser
              version: latest
              args: release --clean --skip-validate
            env:
              GITHUB_TOKEN: ${{ secrets.RELEASE_TOKEN }}

.github/workflows/docker-release.yml (57 changed lines, vendored)

    @@ -1,57 +0,0 @@
    name: Build and push docker image

    on:
      push:
        tags:
          - 3.* # triggered on every push with tag 3.*
      workflow_dispatch: # or just on button clicked

    jobs:
      docker-build:
        runs-on: ubuntu-latest
        steps:
          - name: Checkout
            uses: actions/checkout@v2
          - run: git fetch --prune --unshallow
          - name: Setup Environments
            id: envs
            run: |
              CLOUDREVE_LATEST_TAG=$(git describe --tags --abbrev=0)
              DOCKER_IMAGE="cloudreve/cloudreve"

              echo "RELEASE_VERSION=${GITHUB_REF#refs}"
              TAGS="${DOCKER_IMAGE}:latest,${DOCKER_IMAGE}:${CLOUDREVE_LATEST_TAG}"

              echo "CLOUDREVE_LATEST_TAG:${CLOUDREVE_LATEST_TAG}"
              echo ::set-output name=tags::${TAGS}
          - name: Setup QEMU Emulator
            uses: docker/setup-qemu-action@master
            with:
              platforms: all
          - name: Setup Docker Buildx Command
            id: buildx
            uses: docker/setup-buildx-action@master
          - name: Login to Dockerhub
            uses: docker/login-action@v1
            with:
              username: ${{ secrets.DOCKERHUB_USERNAME }}
              password: ${{ secrets.DOCKERHUB_PASSWORD }}
          - name: Build Docker Image and Push
            id: docker_build
            uses: docker/build-push-action@v2
            with:
              push: true
              builder: ${{ steps.buildx.outputs.name }}
              context: .
              file: ./Dockerfile
              platforms: linux/amd64,linux/arm64,linux/arm/v7
              tags: ${{ steps.envs.outputs.tags }}
          - name: Update Docker Hub Description
            uses: peter-evans/dockerhub-description@v3
            with:
              username: ${{ secrets.DOCKERHUB_USERNAME }}
              password: ${{ secrets.DOCKERHUB_PASSWORD }}
              repository: cloudreve/cloudreve
              short-description: ${{ github.event.repository.description }}
          - name: Image Digest
            run: echo ${{ steps.docker_build.outputs.digest }}

.github/workflows/test.yml (35 changed lines, vendored)

    @@ -1,35 +0,0 @@
    name: Test

    on:
      pull_request:
        branches:
          - master
      push:
        branches: [master]

    jobs:
      test:
        name: Test
        runs-on: ubuntu-latest
        steps:
          - name: Set up Go 1.20
            uses: actions/setup-go@v2
            with:
              go-version: "1.20"
            id: go

          - name: Check out code into the Go module directory
            uses: actions/checkout@v2
            with:
              submodules: "recursive"

          - name: Build static files
            run: |
              mkdir assets/build
              touch assets/build/test.html

          - name: Test
            run: go test -coverprofile=coverage.txt -covermode=atomic ./...

          - name: Upload coverage reports to Codecov
            uses: codecov/codecov-action@v2

.gitignore (7 changed lines, vendored)

    @@ -1,5 +1,4 @@
    # Binaries for programs and plugins
    cloudreve
    *.exe
    *.exe~
    *.dll
    @@ -8,7 +7,7 @@ cloudreve
    *.db
    *.bin
    /release/
    assets.zip
    application/statics/assets.zip

    # Test binary, build with `go test -c`
    *.test
    @@ -31,3 +30,7 @@ conf/conf.ini
    .vscode/

    dist/
    data/
    tmp/
    .devcontainer/
    cloudreve

    @@ -1,29 +1,32 @@
    env:
      - CI=false
      - GENERATE_SOURCEMAP=false
    version: 2

    before:
      hooks:
        - go mod tidy
        - sh -c "cd assets && rm -rf build && yarn install --network-timeout 1000000 && yarn run build && cd ../ && zip -r - assets/build >assets.zip"
        - chmod +x ./.build/build-assets.sh
        - ./.build/build-assets.sh {{.Version}}

    builds:
      -
        env:
      - env:
          - CGO_ENABLED=0

        binary: cloudreve

        ldflags:
          - -X 'github.com/cloudreve/Cloudreve/v3/pkg/conf.BackendVersion={{.Tag}}' -X 'github.com/cloudreve/Cloudreve/v3/pkg/conf.LastCommit={{.ShortCommit}}'
          - -s -w
          - -X 'github.com/cloudreve/Cloudreve/v4/application/constants.BackendVersion={{.Tag}}' -X 'github.com/cloudreve/Cloudreve/v4/application/constants.LastCommit={{.ShortCommit}}'

        goos:
          - linux
          - windows
          - darwin
          - freebsd

        goarch:
          - amd64
          - arm
          - arm64
          - loong64

        goarm:
          - 5
    @@ -37,85 +40,79 @@ builds:
            goarm: 6
          - goos: windows
            goarm: 7
          - goos: windows
            goarch: loong64
          - goos: freebsd
            goarch: loong64
          - goos: freebsd
            goarch: arm

    archives:
      - format: tar.gz
      - formats: ["tar.gz"]
        # this name template makes the OS and Arch compatible with the results of uname.
        name_template: >-
          cloudreve_{{.Tag}}_{{- .Os }}_{{ .Arch }}
          {{- if .Arm }}v{{ .Arm }}{{ end }}
        # use zip for windows archives
        format_overrides:
          - goos: windows
            format: zip
          - goos: windows
            formats: ["zip"]

    checksum:
      name_template: 'checksums.txt'
      name_template: "checksums.txt"
    snapshot:
      name_template: "{{ incpatch .Version }}-next"
      version_template: "{{ incpatch .Version }}-next"

    changelog:
      sort: asc
      filters:
        exclude:
          - '^docs:'
          - '^test:'
          - "^docs:"
          - "^test:"

    release:
      draft: true
      prerelease: auto
      target_commitish: '{{ .Commit }}'
      target_commitish: "{{ .Commit }}"
      name_template: "{{.Version}}"

    dockers:
      -
        dockerfile: Dockerfile
      - dockerfile: Dockerfile
        use: buildx
        build_flag_templates:
          - "--platform=linux/amd64"
          - "--provenance=false"
        goos: linux
        goarch: amd64
        goamd64: v1
        extra_files:
          - .build/aria2.supervisor.conf
          - .build/entrypoint.sh
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-amd64"
      -
        dockerfile: Dockerfile
      - dockerfile: Dockerfile
        use: buildx
        build_flag_templates:
          - "--platform=linux/arm64"
          - "--provenance=false"
        goos: linux
        goarch: arm64
        extra_files:
          - .build/aria2.supervisor.conf
          - .build/entrypoint.sh
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-arm64"
      -
        dockerfile: Dockerfile
        use: buildx
        build_flag_templates:
          - "--platform=linux/arm/v6"
        goos: linux
        goarch: arm
        goarm: '6'
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-armv6"
      -
        dockerfile: Dockerfile
        use: buildx
        build_flag_templates:
          - "--platform=linux/arm/v7"
        goos: linux
        goarch: arm
        goarm: '7'
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-armv7"

    docker_manifests:
      - name_template: "cloudreve/cloudreve:latest"
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-amd64"
          - "cloudreve/cloudreve:{{ .Tag }}-arm64"
          - "cloudreve/cloudreve:{{ .Tag }}-armv6"
          - "cloudreve/cloudreve:{{ .Tag }}-armv7"
      - name_template: "cloudreve/cloudreve:v4"
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-amd64"
          - "cloudreve/cloudreve:{{ .Tag }}-arm64"
      - name_template: "cloudreve/cloudreve:{{ .Tag }}"
        image_templates:
          - "cloudreve/cloudreve:{{ .Tag }}-amd64"
          - "cloudreve/cloudreve:{{ .Tag }}-arm64"
          - "cloudreve/cloudreve:{{ .Tag }}-armv6"
          - "cloudreve/cloudreve:{{ .Tag }}-armv7"
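The `name_template` under `archives` joins the tag, OS, and architecture, and appends `v<goarm>` only for ARM builds. The shell sketch below illustrates the resulting base names, assuming the folded scalar collapses to a single line and that `.Arm` is empty for non-ARM targets. `archive_name` is a hypothetical helper that only mirrors the template and does not invoke the release tool; the tag `4.1.0` is an illustrative value.

```shell
#!/bin/sh
# Hypothetical helper mirroring the archive name_template:
#   cloudreve_{{.Tag}}_{{- .Os }}_{{ .Arch }}{{- if .Arm }}v{{ .Arm }}{{ end }}
# Arguments: tag, os, arch, and an optional goarm value.
archive_name() {
  tag=$1
  os=$2
  arch=$3
  arm=${4:-}
  # ${arm:+v$arm} expands to "v<goarm>" only when arm is non-empty.
  printf 'cloudreve_%s_%s_%s%s\n' "$tag" "$os" "$arch" "${arm:+v$arm}"
}

archive_name 4.1.0 linux amd64    # cloudreve_4.1.0_linux_amd64
archive_name 4.1.0 linux arm 7    # cloudreve_4.1.0_linux_armv7
```

The archive format then supplies the extension: `tar.gz` by default and `zip` for Windows, per the `format_overrides` entry.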

Dockerfile (29 changed lines)

    @@ -1,17 +1,30 @@
    FROM alpine:latest

    WORKDIR /cloudreve
    COPY cloudreve ./cloudreve

    RUN apk update \
        && apk add --no-cache tzdata \
        && apk add --no-cache tzdata vips-tools ffmpeg libreoffice aria2 supervisor font-noto font-noto-cjk libheif libraw-tools\
        && cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime \
        && echo "Asia/Shanghai" > /etc/timezone \
        && chmod +x ./cloudreve \
        && mkdir -p /data/aria2 \
        && chmod -R 766 /data/aria2
        && mkdir -p ./data/temp/aria2 \
        && chmod -R 766 ./data/temp/aria2

    EXPOSE 5212
    VOLUME ["/cloudreve/uploads", "/cloudreve/avatar", "/data"]
    ENV CR_ENABLE_ARIA2=1 \
        CR_SETTING_DEFAULT_thumb_ffmpeg_enabled=1 \
        CR_SETTING_DEFAULT_thumb_vips_enabled=1 \
        CR_SETTING_DEFAULT_thumb_libreoffice_enabled=1 \
        CR_SETTING_DEFAULT_media_meta_ffprobe=1 \
        CR_SETTING_DEFAULT_thumb_libraw_enabled=1

    COPY .build/aria2.supervisor.conf .build/entrypoint.sh ./
    COPY cloudreve ./cloudreve

    RUN chmod +x ./cloudreve \
        && chmod +x ./entrypoint.sh

    EXPOSE 5212 443

    VOLUME ["/cloudreve/data"]

    ENTRYPOINT ["sh", "./entrypoint.sh"]

    ENTRYPOINT ["./cloudreve"]

100

README.md


@@ -1,4 +1,4 @@

|

||||

[中文版本](https://github.com/cloudreve/Cloudreve/blob/master/README_zh-CN.md)

|

||||

[中文版本](https://github.com/cloudreve/cloudreve/blob/master/README_zh-CN.md)

|

||||

|

||||

<h1 align="center">

|

||||

<br>

|

||||

@@ -7,97 +7,69 @@

|

||||

Cloudreve

|

||||

<br>

|

||||

</h1>

|

||||

<h4 align="center">Self-hosted file management system with muilt-cloud support.</h4>

|

||||

<h4 align="center">Self-hosted file management system with multi-cloud support.</h4>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://github.com/cloudreve/Cloudreve/actions/workflows/test.yml">

|

||||

<img src="https://img.shields.io/github/actions/workflow/status/cloudreve/Cloudreve/test.yml?branch=master&style=flat-square"

|

||||

alt="GitHub Test Workflow">

|

||||

<a href="https://dev.azure.com/abslantliu/cloudreve/_build?definitionId=6">

|

||||

<img src="https://img.shields.io/github/check-runs/cloudreve/cloudreve/master"

|

||||

alt="Azure pipelines">

|

||||

</a>

|

||||

<a href="https://codecov.io/gh/cloudreve/Cloudreve"><img src="https://img.shields.io/codecov/c/github/cloudreve/Cloudreve?style=flat-square"></a>

|

||||

<a href="https://goreportcard.com/report/github.com/cloudreve/Cloudreve">

|

||||

<img src="https://goreportcard.com/badge/github.com/cloudreve/Cloudreve?style=flat-square">

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">

|

||||

<img src="https://img.shields.io/github/v/release/cloudreve/cloudreve?include_prereleases" />

|

||||

</a>

|

||||

<a href="https://github.com/cloudreve/Cloudreve/releases">

|

||||

<img src="https://img.shields.io/github/v/release/cloudreve/Cloudreve?include_prereleases&style=flat-square" />

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">

|

||||

<img src="https://badgen.net/static/release%20size/34%20MB/blue"/>

|

||||

</a>

|

||||

<a href="https://hub.docker.com/r/cloudreve/cloudreve">

|

||||

<img src="https://img.shields.io/docker/image-size/cloudreve/cloudreve?style=flat-square"/>

|

||||

<img alt="Docker Pulls" src="https://img.shields.io/docker/pulls/cloudreve/cloudreve" />

|

||||

</a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<a href="https://cloudreve.org">Homepage</a> •

|

||||

<a href="https://demo.cloudreve.org">Demo</a> •

|

||||

<a href="https://forum.cloudreve.org/">Discussion</a> •

|

||||

<a href="https://docs.cloudreve.org/v/en/">Documents</a> •

|

||||

<a href="https://github.com/cloudreve/Cloudreve/releases">Download</a> •

|

||||

<a href="https://t.me/cloudreve_official">Telegram Group</a> •

|

||||

<a href="#scroll-License">License</a>

|

||||

<a href="https://demo.cloudreve.org">Try it</a> •

|

||||

<a href="https://github.com/cloudreve/cloudreve/discussions">Discussion</a> •

|

||||

<a href="https://docs.cloudreve.org">Documents</a> •

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">Download</a> •

|

||||

<a href="https://t.me/cloudreve_official">Telegram</a> •

|

||||

<a href="https://discord.com/invite/WTpMFpZT76">Discord</a>

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

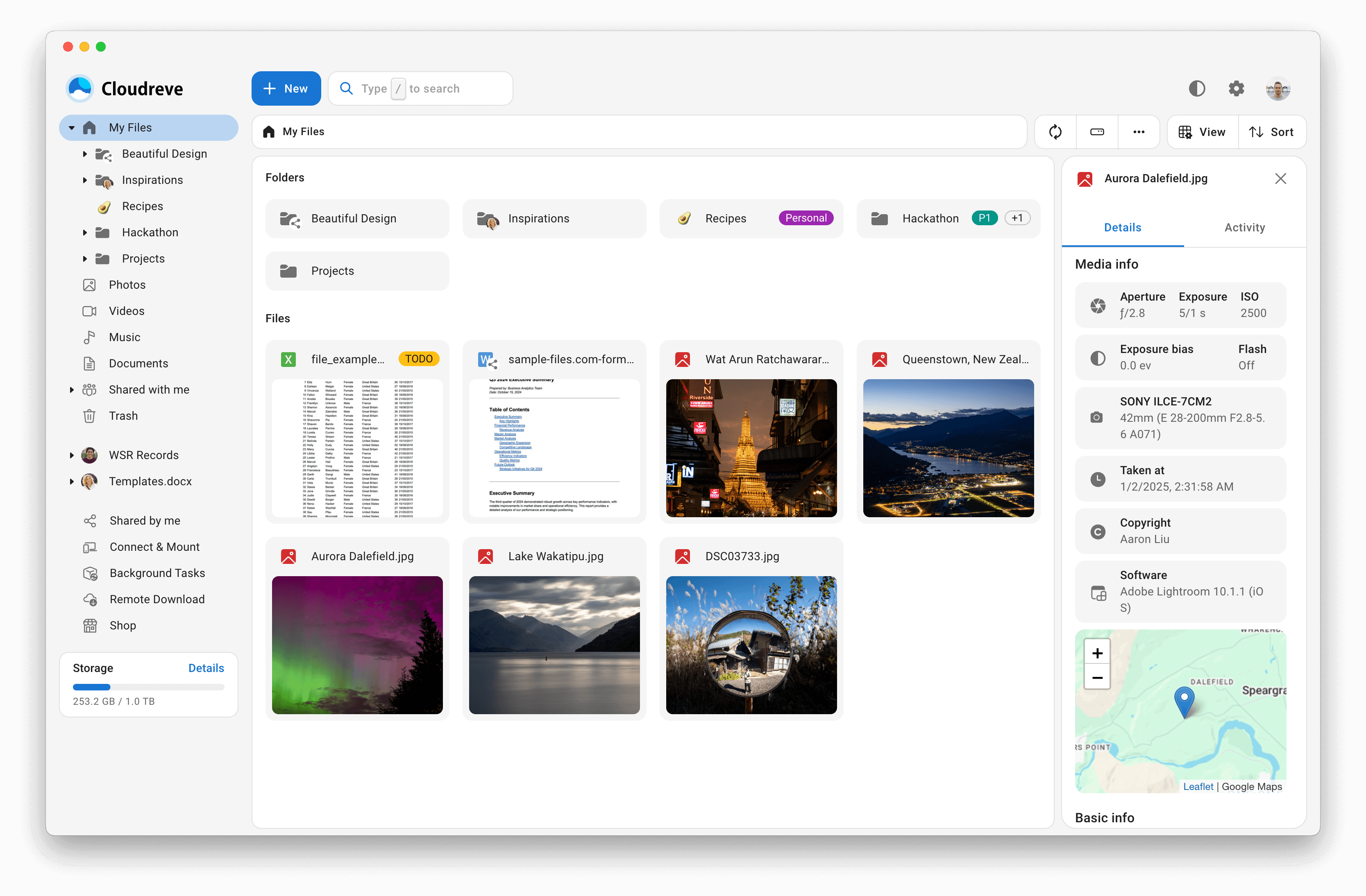

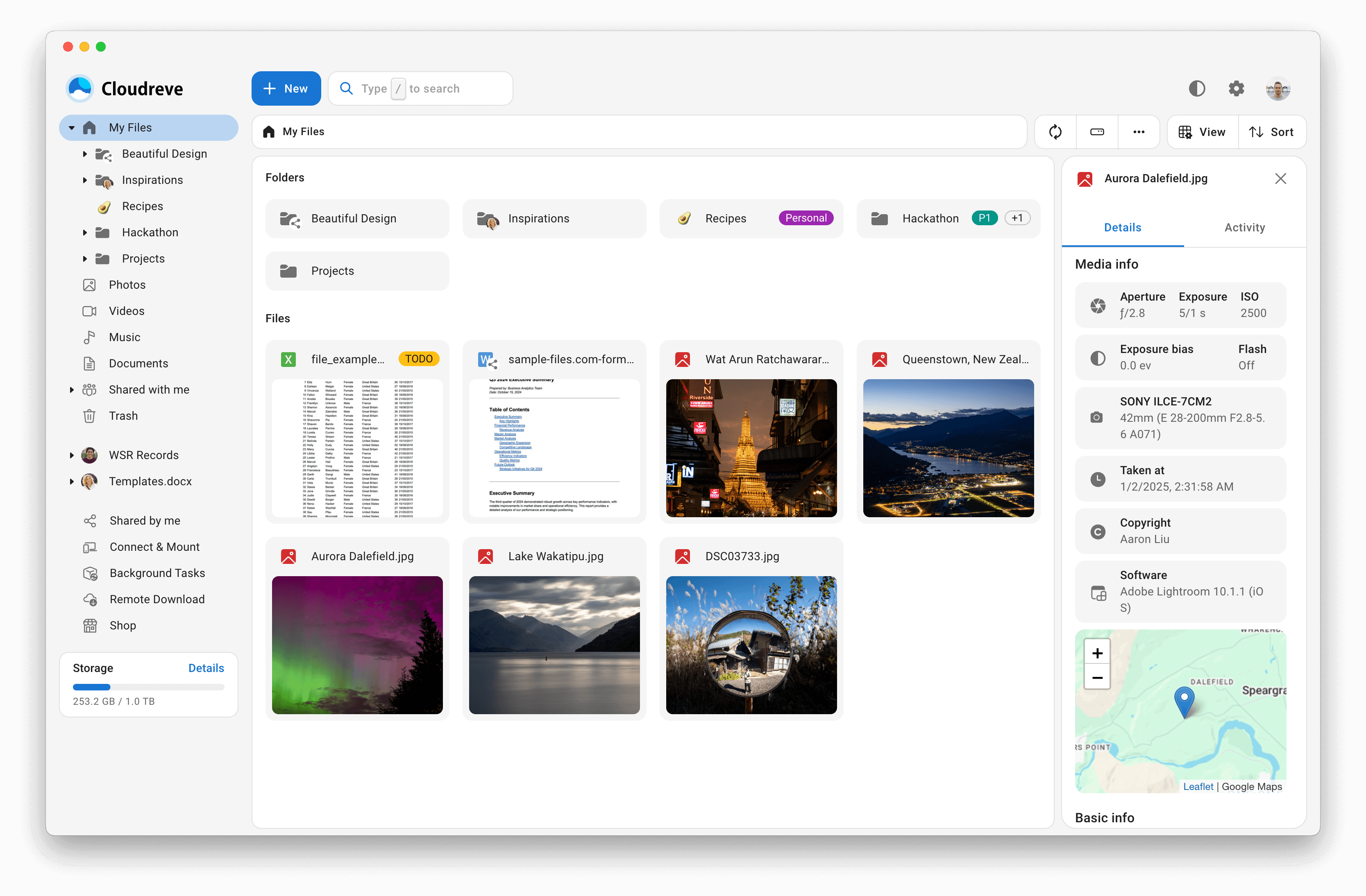

## :sparkles: Features

|

||||

|

||||

* :cloud: Support storing files into Local storage, Remote storage, Qiniu, Aliyun OSS, Tencent COS, Upyun, OneDrive, S3 compatible API.

|

||||

* :outbox_tray: Upload/Download in directly transmission with speed limiting support.

|

||||

* 💾 Integrate with Aria2 to download files offline, use multiple download nodes to share the load.

|

||||

* 📚 Compress/Extract files, download files in batch.

|

||||

* 💻 WebDAV support covering all storage providers.

|

||||

* :zap:Drag&Drop to upload files or folders, with streaming upload processing.

|

||||

* :card_file_box: Drag & Drop to manage your files.

|

||||

* :family_woman_girl_boy: Multi-users with multi-groups.

|

||||

* :link: Create share links for files and folders with expiration date.

|

||||

* :eye_speech_bubble: Preview videos, images, audios, ePub files online; edit texts, Office documents online.

|

||||

* :art: Customize theme colors, dark mode, PWA application, SPA, i18n.

|

||||

* :rocket: All-In-One packing, with all features out-of-the-box.

|

||||

* 🌈 ... ...

|

||||

- :cloud: Support storing files into Local, Remote node, OneDrive, S3 compatible API, Qiniu Kodo, Aliyun OSS, Tencent COS, Huawei Cloud OBS, Kingsoft Cloud KS3, Upyun.

|

||||

- :outbox_tray: Upload/download via direct transfer between the client and storage providers.

|

||||

- 💾 Integrate with Aria2/qBittorrent to download files in background, use multiple download nodes to share the load.

|

||||

- 📚 Compress/Extract/Preview archived files, download files in batch.

|

||||

- 💻 WebDAV support covering all storage providers.

|

||||

- :zap:Drag&Drop to upload files or folders, with parallel resumable upload support.

|

||||

- :card_file_box: Extract media metadata from files, search files by metadata or tags.

|

||||

- :family_woman_girl_boy: Multi-users with multi-groups.

|

||||

- :link: Create share links for files and folders with expiration date.

|

||||

- :eye_speech_bubble: Preview videos, images, audios, ePub files online; edit texts, diagrams, Markdown, images, Office documents online.

|

||||

- :art: Customize theme colors, dark mode, PWA application, SPA, i18n.

|

||||

- :rocket: All-in-one packaging, with all features out of the box.

|

||||

- 🌈 ... ...

|

||||

|

||||

## :hammer_and_wrench: Deploy

|

||||

|

||||

Download the main binary for your target machine OS, CPU architecture and run it directly.

|

||||

To deploy Cloudreve, you can refer to [Getting started](https://docs.cloudreve.org/overview/quickstart) for a quick local deployment to test.

|

||||

|

||||

```shell

|

||||

# Extract Cloudreve binary

|

||||

tar -zxvf cloudreve_VERSION_OS_ARCH.tar.gz

|

||||

|

||||

# Grant execute permission

|

||||

chmod +x ./cloudreve

|

||||

|

||||

# Start Cloudreve

|

||||

./cloudreve

|

||||

```

|

||||

|

||||

The above is a minimum deploy example, you can refer to [Getting started](https://docs.cloudreve.org/v/en/getting-started/install) for a completed deployment.

|

||||

When you're ready to deploy Cloudreve to a production environment, you can refer to [Deploy](https://docs.cloudreve.org/overview/deploy/) for a complete deployment.

|

||||

|

||||

## :gear: Build

|

||||

|

||||

You need to have `Go >= 1.18`, `node.js`, `yarn`, `zip`, [goreleaser](https://goreleaser.com/intro/) and other necessary dependencies before you can build it yourself.

|

||||

Please refer to [Build](https://docs.cloudreve.org/overview/build/) for how to build Cloudreve from source code.

|

||||

|

||||

#### Install goreleaser

|

||||

## :rocket: Contributing

|

||||

|

||||

```shell

|

||||

go install github.com/goreleaser/goreleaser@latest

|

||||

```

|

||||

|

||||

#### Clone the code

|

||||

|

||||

```shell

|

||||

git clone --recurse-submodules https://github.com/cloudreve/Cloudreve.git

|

||||

```

|

||||

|

||||

#### Compile

|

||||

|

||||

```shell

|

||||

goreleaser build --clean --single-target --snapshot

|

||||

```

|

||||

If you're interested in contributing to Cloudreve, please refer to [Contributing](https://docs.cloudreve.org/api/contributing/) for how to contribute to Cloudreve.

|

||||

|

||||

## :alembic: Stacks

|

||||

|

||||

* [Go](https://golang.org/) + [Gin](https://github.com/gin-gonic/gin)

|

||||

* [React](https://github.com/facebook/react) + [Redux](https://github.com/reduxjs/redux) + [Material-UI](https://github.com/mui-org/material-ui)

|

||||

- [Go](https://golang.org/) + [Gin](https://github.com/gin-gonic/gin) + [ent](https://github.com/ent/ent)

|

||||

- [React](https://github.com/facebook/react) + [Redux](https://github.com/reduxjs/redux) + [Material-UI](https://github.com/mui-org/material-ui)

|

||||

|

||||

## :scroll: License

|

||||

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

[English Version](https://github.com/cloudreve/Cloudreve/blob/master/README.md)

|

||||

[English Version](https://github.com/cloudreve/cloudreve/blob/master/README.md)

|

||||

|

||||

<h1 align="center">

|

||||

<br>

|

||||

@@ -11,93 +11,66 @@

|

||||

<h4 align="center">A public cloud file system with support for multiple cloud storage drivers.</h4>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://github.com/cloudreve/Cloudreve/actions/workflows/test.yml">

|

||||

<img src="https://img.shields.io/github/actions/workflow/status/cloudreve/Cloudreve/test.yml?branch=master&style=flat-square"

|

||||

alt="GitHub Test Workflow">

|

||||

<a href="https://dev.azure.com/abslantliu/cloudreve/_build?definitionId=6">

|

||||

<img src="https://img.shields.io/github/check-runs/cloudreve/cloudreve/master"

|

||||

alt="Azure pipelines">

|

||||

</a>

|

||||

<a href="https://codecov.io/gh/cloudreve/Cloudreve"><img src="https://img.shields.io/codecov/c/github/cloudreve/Cloudreve?style=flat-square"></a>

|

||||

<a href="https://goreportcard.com/report/github.com/cloudreve/Cloudreve">

|

||||

<img src="https://goreportcard.com/badge/github.com/cloudreve/Cloudreve?style=flat-square">

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">

|

||||

<img src="https://img.shields.io/github/v/release/cloudreve/cloudreve?include_prereleases" />

|

||||

</a>

|

||||

<a href="https://github.com/cloudreve/Cloudreve/releases">

|

||||

<img src="https://img.shields.io/github/v/release/cloudreve/Cloudreve?include_prereleases&style=flat-square" />

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">

|

||||

<img src="https://badgen.net/static/release%20size/34%20MB/blue"/>

|

||||

</a>

|

||||

<a href="https://hub.docker.com/r/cloudreve/cloudreve">

|

||||

<img src="https://img.shields.io/docker/image-size/cloudreve/cloudreve?style=flat-square"/>

|

||||

<img alt="Docker Pulls" src="https://img.shields.io/docker/pulls/cloudreve/cloudreve" />

|

||||

</a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<a href="https://cloudreve.org">Homepage</a> •

|

||||

<a href="https://demo.cloudreve.org">Demo site</a> •

|

||||

<a href="https://forum.cloudreve.org/">Community forum</a> •

|

||||

<a href="https://docs.cloudreve.org/">Documentation</a> •

|

||||

<a href="https://github.com/cloudreve/Cloudreve/releases">Download</a> •

|

||||

<a href="https://t.me/cloudreve_official">Telegram group</a> •

|

||||

<a href="#scroll-许可证">License</a>

|

||||

<a href="https://demo.cloudreve.org">Demo</a> •

|

||||

<a href="https://github.com/cloudreve/cloudreve/discussions">Discussion</a> •

|

||||

<a href="https://docs.cloudreve.org">Documentation</a> •

|

||||

<a href="https://github.com/cloudreve/cloudreve/releases">Download</a> •

|

||||

<a href="https://t.me/cloudreve_official">Telegram</a> •

|

||||

<a href="https://discord.com/invite/WTpMFpZT76">Discord</a>

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

|

||||

## :sparkles: Features

|

||||

|

||||

* :cloud: Supports local storage, remote nodes, Qiniu, Aliyun OSS, Tencent COS, Upyun, OneDrive (including the 21Vianet edition), and S3-compatible APIs as storage backends

|

||||

* :outbox_tray: Upload/download with direct client transfer and download speed limiting

|

||||

* 💾 Integrates with Aria2 for offline downloads; multiple remote nodes can share the download tasks

|

||||

* 📚 Online compression/extraction and batch download of multiple files as an archive

|

||||

* 💻 WebDAV support covering all storage policies

|

||||

* :zap: Drag-and-drop upload, folder upload, streaming upload processing

|

||||

* :card_file_box: Drag-and-drop file management

|

||||

* :family_woman_girl_boy: Multiple users, user groups, multiple storage policies

|

||||

* :link: Create share links for files and folders, with optional automatic expiration

|

||||

* :eye_speech_bubble: Online preview of videos, images, audio, and ePub files; online editing of text and Office documents

|

||||

* :art: Custom color schemes, dark mode, PWA, site-wide single-page application, i18n support

|

||||

* :rocket: All-In-One packaging, ready to use out of the box

|

||||

* 🌈 ... ...

|

||||

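- :cloud: Supports local storage, remote nodes, Qiniu Kodo, Aliyun OSS, Tencent COS, Huawei Cloud OBS, Kingsoft Cloud KS3, Upyun, OneDrive (including the 21Vianet edition), and S3-compatible APIs as storage backends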

|

||||

- :outbox_tray: Upload/download with direct client transfer and download speed limiting

|

||||

- 💾 Integrates with Aria2/qBittorrent for offline downloads; multiple remote nodes can share the download tasks

|

||||

- 📚 Online compression/extraction/archive preview and batch download of multiple files as an archive

|

||||

- 💻 WebDAV support covering all storage policies

|

||||

- :zap: Drag-and-drop upload, folder upload, parallel chunked upload

|

||||

- :card_file_box: Extract media metadata; search files by metadata or tags

|

||||

- :family_woman_girl_boy: Multiple users, user groups, multiple storage policies

|

||||

- :link: Create share links for files and folders, with optional automatic expiration

|

||||

- :eye_speech_bubble: Online preview of videos, images, audio, and ePub files; online editing of text and Office documents

|

||||

- :art: Custom color schemes, dark mode, PWA, site-wide single-page application, i18n support

|

||||

- :rocket: All-in-One packaging, ready to use out of the box

|

||||

- 🌈 ... ...

|

||||

|

||||

## :hammer_and_wrench: Deploy

|

||||

|

||||

Download the main binary for your target machine's operating system and CPU architecture, then run it directly.

|

||||

You can refer to [Quick start](https://docs.cloudreve.org/overview/quickstart) to launch a local instance for evaluation and testing.

|

||||

|

||||

```shell

|

||||

# Extract the package

|

||||

tar -zxvf cloudreve_VERSION_OS_ARCH.tar.gz

|

||||

|

||||

# Grant execute permission

|

||||

chmod +x ./cloudreve

|

||||

|

||||

# Start Cloudreve

|

||||

./cloudreve

|

||||

```

|

||||

|

||||

The above is the simplest deployment example; you can refer to [Docs - Getting started](https://docs.cloudreve.org/) for a more complete deployment.

|

||||

When you are ready to deploy Cloudreve to a production environment, you can refer to [Deploy](https://docs.cloudreve.org/overview/deploy/) for a complete deployment.

|

||||

|

||||

## :gear: Build

|

||||

|

||||

Before building it yourself, you need `Go >= 1.18`, `node.js`, `yarn`, `zip`, [goreleaser](https://goreleaser.com/intro/) and other necessary dependencies.

|

||||

You can refer to [Build](https://docs.cloudreve.org/overview/build/) to build Cloudreve from source code.

|

||||

|

||||

#### Install goreleaser

|

||||

## :rocket: Contributing

|

||||

|

||||

```shell

|

||||

go install github.com/goreleaser/goreleaser@latest

|

||||

```

|

||||

|

||||

#### Clone the code

|

||||

|

||||

```shell

|

||||

git clone --recurse-submodules https://github.com/cloudreve/Cloudreve.git

|

||||

```

|

||||

|

||||

#### Compile the project

|

||||

|

||||

```shell

|

||||

goreleaser build --clean --single-target --snapshot

|

||||

```

|

||||

If you are interested in contributing code to Cloudreve, please refer to [Contributing](https://docs.cloudreve.org/api/contributing/) to learn how.

|

||||

|

||||

## :alembic: Tech stack

|

||||

|

||||

* [Go](https://golang.org/) + [Gin](https://github.com/gin-gonic/gin)

|

||||

* [React](https://github.com/facebook/react) + [Redux](https://github.com/reduxjs/redux) + [Material-UI](https://github.com/mui-org/material-ui)

|

||||

- [Go](https://golang.org/) + [Gin](https://github.com/gin-gonic/gin) + [ent](https://github.com/ent/ent)

|

||||

- [React](https://github.com/facebook/react) + [Redux](https://github.com/reduxjs/redux) + [Material-UI](https://github.com/mui-org/material-ui)

|

||||

|

||||

## :scroll: License

|

||||

|

||||

|

||||

12

SECURITY.md

Normal file


@@ -0,0 +1,12 @@

|

||||

# Security Policy

|

||||

|

||||

## Supported Versions

|

||||

|

||||

* For high-impact security issues (e.g. related to payments or user permissions), we support 3.8.x and all 4.x versions. However, fixes for 4.x will be released only in the latest sub-version.

|

||||

* For all other security issues, we mainly support versions >= 4.x (where `x` is the latest stable sub-version).

|

||||

|

||||

## Reporting a Vulnerability

|

||||

|

||||

Please send the details of the security issue to `support@cloudreve.org`. Once the vulnerability is confirmed or fixed, you will receive updates in the email thread.

|

||||

|

||||

We will reward successful submissions of security issues with a bounty or swag.

|

||||

247

application/application.go

Normal file


@@ -0,0 +1,247 @@

|

||||

package application

|

||||

|

||||

import (

|

||||

"context"

|

||||

"errors"

|

||||

"fmt"

|

||||

"net"

|

||||

"net/http"

|

||||

_ "net/http/pprof"

|

||||

"os"

|

||||

"time"

|

||||

|

||||

"github.com/cloudreve/Cloudreve/v4/application/constants"

|

||||

"github.com/cloudreve/Cloudreve/v4/application/dependency"

|

||||

"github.com/cloudreve/Cloudreve/v4/ent"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/cache"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/conf"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/crontab"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/email"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/filemanager/driver/onedrive"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/logging"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/setting"

|

||||

"github.com/cloudreve/Cloudreve/v4/pkg/util"

|

||||

"github.com/cloudreve/Cloudreve/v4/routers"

|

||||

"github.com/gin-gonic/gin"

|

||||

)

|

||||

|

||||

type Server interface {

|

||||

// Start starts the Cloudreve server.

|

||||

Start() error

|

||||

PrintBanner()

|

||||

Close()

|

||||

}

|

||||

|

||||

// NewServer constructs a new Cloudreve server instance with given dependency.

|

||||

func NewServer(dep dependency.Dep) Server {

|

||||

return &server{

|

||||

dep: dep,

|

||||

logger: dep.Logger(),

|

||||

config: dep.ConfigProvider(),

|

||||

}

|

||||

}

|

||||

|

||||

type server struct {

|

||||

dep dependency.Dep

|

||||

logger logging.Logger

|

||||

dbClient *ent.Client

|

||||

config conf.ConfigProvider

|

||||

server *http.Server

|

||||

pprofServer *http.Server

|

||||

kv cache.Driver

|

||||

mailQueue email.Driver

|

||||

}

|

||||

|

||||

func (s *server) PrintBanner() {

|

||||

fmt.Print(`

|

||||

___ _ _

|

||||

/ __\ | ___ _ _ __| |_ __ _____ _____

|

||||

/ / | |/ _ \| | | |/ _ | '__/ _ \ \ / / _ \

|

||||

/ /___| | (_) | |_| | (_| | | | __/\ V / __/

|

||||

\____/|_|\___/ \__,_|\__,_|_| \___| \_/ \___|

|

||||

|

||||

V` + constants.BackendVersion + ` Commit #` + constants.LastCommit + ` Pro=` + constants.IsPro + `

|

||||

================================================

|

||||

|

||||

`)

|

||||

}

|

||||

|

||||

func (s *server) Start() error {

|

||||

// Switch to release mode when debug is disabled

|

||||

if !s.config.System().Debug {

|

||||

gin.SetMode(gin.ReleaseMode)

|

||||

}

|

||||

|

||||

s.kv = s.dep.KV()

|

||||

// delete all cached settings

|

||||

_ = s.kv.Delete(setting.KvSettingPrefix)

|

||||

if memKv, ok := s.kv.(*cache.MemoStore); ok {

|

||||

memKv.GarbageCollect(s.logger)

|

||||

}

|

||||

|

||||

// TODO: make sure redis is connected in dep before user traffic.

|

||||

if s.config.System().Mode == conf.MasterMode {

|

||||

s.dbClient = s.dep.DBClient()

|

||||

// TODO: make sure all dep is initialized before server start.

|

||||

s.dep.LockSystem()

|

||||

s.dep.UAParser()

|

||||

|

||||

// Initialize OneDrive credentials

|

||||

credentials, err := onedrive.RetrieveOneDriveCredentials(context.Background(), s.dep.StoragePolicyClient())

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to retrieve OneDrive credentials for CredManager: %w", err)

|

||||

}

|

||||

if err := s.dep.CredManager().Upsert(context.Background(), credentials...); err != nil {

|

||||

return fmt.Errorf("failed to upsert OneDrive credentials to CredManager: %w", err)

|

||||

}

|

||||

crontab.Register(setting.CronTypeOauthCredRefresh, func(ctx context.Context) {

|

||||

dep := dependency.FromContext(ctx)

|

||||

cred := dep.CredManager()

|

||||

cred.RefreshAll(ctx)

|

||||

})

|

||||

|

||||

// Initialize email queue before user traffic starts.

|

||||

_ = s.dep.EmailClient(context.Background())

|

||||

|

||||

// Start all queues

|

||||

s.dep.MediaMetaQueue(context.Background()).Start()

|

||||

s.dep.EntityRecycleQueue(context.Background()).Start()

|

||||

s.dep.IoIntenseQueue(context.Background()).Start()

|

||||

s.dep.RemoteDownloadQueue(context.Background()).Start()

|

||||

|

||||

// Start cron jobs

|

||||

c, err := crontab.NewCron(context.Background(), s.dep)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

c.Start()

|

||||

|

||||

// Start node pool

|

||||

if _, err := s.dep.NodePool(context.Background()); err != nil {

|

||||

return err

|

||||

}

|

||||

} else {

|

||||

s.dep.SlaveQueue(context.Background()).Start()

|

||||

}

|

||||

s.dep.ThumbQueue(context.Background()).Start()

|

||||

|

||||

api := routers.InitRouter(s.dep)

|

||||

api.TrustedPlatform = s.config.System().ProxyHeader

|

||||

s.server = &http.Server{Handler: api}

|

||||

|

||||

// Start pprof server if configured

|

||||

if pprofAddr := s.config.System().Pprof; pprofAddr != "" {

|

||||

s.pprofServer = &http.Server{

|

||||

Addr: pprofAddr,

|

||||

Handler: http.DefaultServeMux,

|

||||

}

|

||||

go func() {

|

||||

s.logger.Info("pprof server listening on %q", pprofAddr)

|

||||

if err := s.pprofServer.ListenAndServe(); err != nil && !errors.Is(err, http.ErrServerClosed) {

|

||||

s.logger.Error("pprof server error: %s", err)

|

||||

}

|

||||

}()

|

||||

}

|

||||

|

||||

// If SSL is enabled

|

||||

if s.config.SSL().CertPath != "" {

|

||||

s.logger.Info("Listening to %q", s.config.SSL().Listen)

|

||||

s.server.Addr = s.config.SSL().Listen

|

||||

if err := s.server.ListenAndServeTLS(s.config.SSL().CertPath, s.config.SSL().KeyPath); err != nil && !errors.Is(err, http.ErrServerClosed) {

|

||||

return fmt.Errorf("failed to listen to %q: %w", s.config.SSL().Listen, err)

|

||||

}

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

// If a Unix socket is enabled

|

||||

if s.config.Unix().Listen != "" {

|

||||

// delete socket file before listening

|

||||

if _, err := os.Stat(s.config.Unix().Listen); err == nil {

|

||||

if err = os.Remove(s.config.Unix().Listen); err != nil {

|

||||

return fmt.Errorf("failed to delete socket file %q: %w", s.config.Unix().Listen, err)

|

||||

}

|

||||

}

|

||||

|

||||

s.logger.Info("Listening to %q", s.config.Unix().Listen)

|

||||

if err := s.runUnix(s.server); err != nil && !errors.Is(err, http.ErrServerClosed) {

|

||||

return fmt.Errorf("failed to listen to %q: %w", s.config.Unix().Listen, err)

|

||||

}

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

s.logger.Info("Listening to %q", s.config.System().Listen)

|

||||

s.server.Addr = s.config.System().Listen

|

||||

if err := s.server.ListenAndServe(); err != nil && !errors.Is(err, http.ErrServerClosed) {

|

||||

return fmt.Errorf("failed to listen to %q: %w", s.config.System().Listen, err)

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func (s *server) Close() {

|

||||

if s.dbClient != nil {

|

||||

s.logger.Info("Shutting down database connection...")

|

||||

if err := s.dbClient.Close(); err != nil {

|

||||

s.logger.Error("Failed to close database connection: %s", err)

|

||||

}

|

||||

}

|

||||

|

||||

ctx := context.Background()

|

||||

if conf.SystemConfig.GracePeriod != 0 {

|

||||

var cancel context.CancelFunc

|

||||

ctx, cancel = context.WithTimeout(ctx, time.Duration(s.config.System().GracePeriod)*time.Second)

|

||||

defer cancel()

|

||||

}

|

||||

|

||||

s.dep.EventHub().Close()

|

||||

|

||||

// Shutdown http server

|

||||

if s.server != nil {

|

||||

err := s.server.Shutdown(ctx)

|

||||

if err != nil {

|

||||

s.logger.Error("Failed to shutdown server: %s", err)

|

||||

}

|

||||

}

|

||||

|

||||

// Shutdown pprof server

|

||||

if s.pprofServer != nil {

|

||||

if err := s.pprofServer.Shutdown(ctx); err != nil {

|

||||

s.logger.Error("Failed to shutdown pprof server: %s", err)

|

||||

}

|

||||

}

|

||||

|

||||

if s.kv != nil {

|

||||

if err := s.kv.Persist(util.DataPath(cache.DefaultCacheFile)); err != nil {

|

||||

s.logger.Warning("Failed to persist cache: %s", err)

|

||||

}

|

||||

}

|

||||

|

||||

if err := s.dep.Shutdown(ctx); err != nil {

|

||||

s.logger.Warning("Failed to shutdown dependency manager: %s", err)

|

||||

}

|

||||

}
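
`Close` bounds the shutdown with a timeout only when a non-zero grace period is configured; otherwise the background context lets `Shutdown` wait indefinitely. A small sketch of that choice, assuming the grace period is an integer number of seconds as the `time.Duration(...)*time.Second` conversion above suggests; `shutdownContext` is a hypothetical name introduced here for illustration.

```go
package main

import (
	"context"
	"fmt"
	"time"
)

// shutdownContext (hypothetical helper) returns a context that expires after
// gracePeriodSeconds, or one without a deadline when the grace period is 0.
func shutdownContext(gracePeriodSeconds int) (context.Context, context.CancelFunc) {
	if gracePeriodSeconds != 0 {
		return context.WithTimeout(context.Background(),
			time.Duration(gracePeriodSeconds)*time.Second)
	}
	// No deadline: callers may wait as long as shutdown takes.
	return context.WithCancel(context.Background())
}

func main() {
	bounded, cancelBounded := shutdownContext(5)
	defer cancelBounded()
	_, hasDeadline := bounded.Deadline()
	fmt.Println("grace period 5s, has deadline:", hasDeadline)

	unbounded, cancelUnbounded := shutdownContext(0)
	defer cancelUnbounded()
	_, hasDeadline = unbounded.Deadline()
	fmt.Println("grace period 0, has deadline:", hasDeadline)
}
```

Returning a cancel function in both cases lets the caller always `defer cancel()` without branching.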

|

||||

|

||||

func (s *server) runUnix(server *http.Server) error {

|

||||

listener, err := net.Listen("unix", s.config.Unix().Listen)

|

||||

if err != nil {

|

||||

return err

|